In a scientific breakthrough, researchers identified a way to facilitate speech in a paralyzed man — by converting his thoughts into words on a computer screen.

Their findings were published in The New England Journal of Medicine on Wednesday. People who are paralyzed by strokes or accidents, or who are diagnosed with ALS (Lou Gehrig’s disease), may develop what is known as “anarthria,” the loss of the ability to articulate speech. The research is pertinent for improving the quality of life of those who can no longer turn their thoughts into spoken words.

The participant in the study was one such person: as a result of a brain-stem stroke at a very young age, he was paralyzed and lost the ability to speak. In the present research, scientists used a “speech neuroprosthetic,” a device implanted in the participant’s brain, to decipher what he intended to say.

“This is the first time someone just naturally trying to say words could be decoded into words just from brain activity,” Dr. David Moses, lead author of the study, said in a press release.

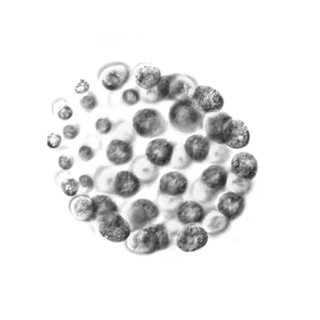

The fascinating approach unfolded like this: the researchers recorded the participant’s brain activity through electrodes over the course of 48 sessions. The neuroprosthetic helped decode the brain signals that control speech: the activity associated with the vocal cords, the movement of the lips and tongue, and everything else used to physically shape and sound out words.

This means the algorithms were able to identify words from the participant’s brain signals. The vocabulary set for the study contained a total of 50 words that are essential for day-to-day life, such as “water” or “good.” Drawing on preexisting language models, the algorithm could then estimate which word the participant most likely had in mind.

The scientists then used the words from this vocabulary set to ask the participant questions, and found that he was able to respond in full sentences built from the same vocabulary set. They were able to decode complete sentences from the participant’s brain activity almost in real time, with a delay of about three to four seconds.

For instance, the question “Would you like some water?” received the response, “No, I am not thirsty.”

“Most of us take for granted how easily we communicate through speech,” Dr. Edward Chang, co-lead author of the study, told The Guardian. The results were also fairly accurate: the neuroprosthetic system included a feature similar to the “autocorrect” function in phones to improve accuracy.

For those with motor function loss, previous advances in prosthetics have used a similar approach to translate intended movements into action. For example, a person’s intention to move or pick up something could be carried out through a robotic arm.

People who lost their ability to speak, meanwhile, have had very limited and much slower options to communicate. These include devices that detect eye movements or, as in the case of the participant in the study, pointers controlled by subtle head movements to touch words or letters on a screen.

But this study goes a step further to allow thoughts themselves to be communicated directly. The new device is a faster and much more organic method of communication for users whose cognitive functioning is intact.

While the findings are promising, researchers note that more research is needed to make the process faster and more accurate, and to expand the vocabulary set. They hope to find a way for thoughts to be “spoken” through a computer-generated voice rather than being displayed on a screen.

For now, scientists and experts are excited to explore novel ways of enhancing patients’ quality of life. In an editorial, Harvard neurologists Leigh Hochberg and Sydney Cash called the discovery a “pioneering demonstration.”

The improvements can help people affected by stroke, accidents, or ALS, who “know what they want to communicate,” Hochberg and Cash noted. “Their brains prepare messages for delivery, but those messages are trapped.”

“It’s exciting to think we’re at the very beginning of a new chapter, a new field,” Chang said.